Vulnerabilities in cloud-sharing services stem from the use of multiple cloud services, which forces users to keep adapting and adjusting their expectations.

In part 1, I discussed some major vulnerabilities that using cloud-sharing services causes. These included routine cloud usage leading to users opening emails from unknown addresses; complying with form emails that have no personalized messages or subject lines; clicking on unverifiable hyperlinks in emails; and violating the cyber safety training that cautions against all the aforementioned actions. The security flaws in cloud-sharing services lie not in the user but in the developmental focus of the various cloud services.

Different Authentication Considerations for Cloud Services

Some cloud services like Google Drive are focused on integrating their cloud offerings with their alphabet-soup of services. Others, such as Dropbox, are focused on creating a stand-alone cross-platform portal. Still others, such as Apple, are focused on increasing subscription revenues from their device user population.

As a consequence, not only does each provider prioritize a different aspect of the sharing process, but this larger goal also comes before the user, who is nothing more than a potential revenue target.

Changing this means more than implementing more robust authentication protocols and encryption standards. These are necessary, but they do little to reduce vulnerabilities that are rooted in the user adaptation process. If anything, they force users to adapt to even more varied implementations. Improving resilience in cloud platforms cannot be done on a piecemeal basis; it requires a unified effort by cloud service providers.

Photo by Daniel Korpai on Unsplash

Integrating Different Cloud Services

Here, industry groups such as the Cloud Security Alliance can help by bringing together various cloud providers and taking a holistic look at how users adapt to different cloud-use environments and estimate risk in them.

A big part of this endeavor will involve getting to the root of users’ Cyber Risk Beliefs (CRB): their mental assumptions about the risks of their online actions. We know from my research that many of these risk beliefs are inaccurate. For instance, many users mistakenly construe the HTTPS symbol to mean that a website is authentic, or believe that a PDF document is more secure than a Word document because they cannot edit it.

We need to understand how CRB manifest themselves in cloud environments. This involves answering questions such as whether users believe that certain cloud services are more secure than others, and whether they think that such services make sharing documents, or certain types of documents, safer.

Answers to such questions will reveal how users mentally orient to different cloud services, what they store on them, and how they react to files shared through them. For instance, if users believe a specific portal makes documents safe, they might be more willing to open files that purportedly come from such portals in a spear-phishing email. Beliefs such as these might also influence how users enable various apps on cloud portals, what they store online, and how careful they are about their stored data. Because CRB influence the adaptive use of different cloud services, understanding them can help design a safer cloud user experience.

Improving user security on cloud platforms also requires the development of novel technical constraints. Since many social engineering attacks conceal malware in hyperlinks, cloud portals need to collaborate and develop a virtualized space in which all shared links are generated and deployed. This way, spoofing of hyperlinks or leading users to watering hole sites is far more difficult because the domains from which the links are generated would be more uniform and recognizable to users.
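To illustrate why uniform link domains would help, here is a minimal Python sketch of the kind of check that becomes possible once every shared link comes from one recognizable place. The host name `links.shared-cloud.example` and the function `is_recognized_share_link` are hypothetical; no such shared space exists today, and a real deployment would need far more than a host comparison.

```python
from urllib.parse import urlsplit

# Hypothetical host for the shared link space; not a real service.
SHARED_LINK_HOSTS = frozenset({"links.shared-cloud.example"})


def is_recognized_share_link(url, trusted_hosts=SHARED_LINK_HOSTS):
    """Return True only for HTTPS links whose host exactly matches a trusted host."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        # Malformed URLs are treated as unrecognized.
        return False
    if parts.scheme != "https" or host is None:
        return False
    # Exact match only: look-alike hosts that merely contain the name fail.
    return host.lower().rstrip(".") in trusted_hosts
```

Because the comparison is an exact match on the host, a look-alike such as `https://links.shared-cloud.example.evil.test/f/abc123` is rejected, as is a link that hides the real host behind an `@` sign. With dozens of unrelated sharing domains, neither software nor users can apply a rule this simple.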

User Interfaces of Cloud Services

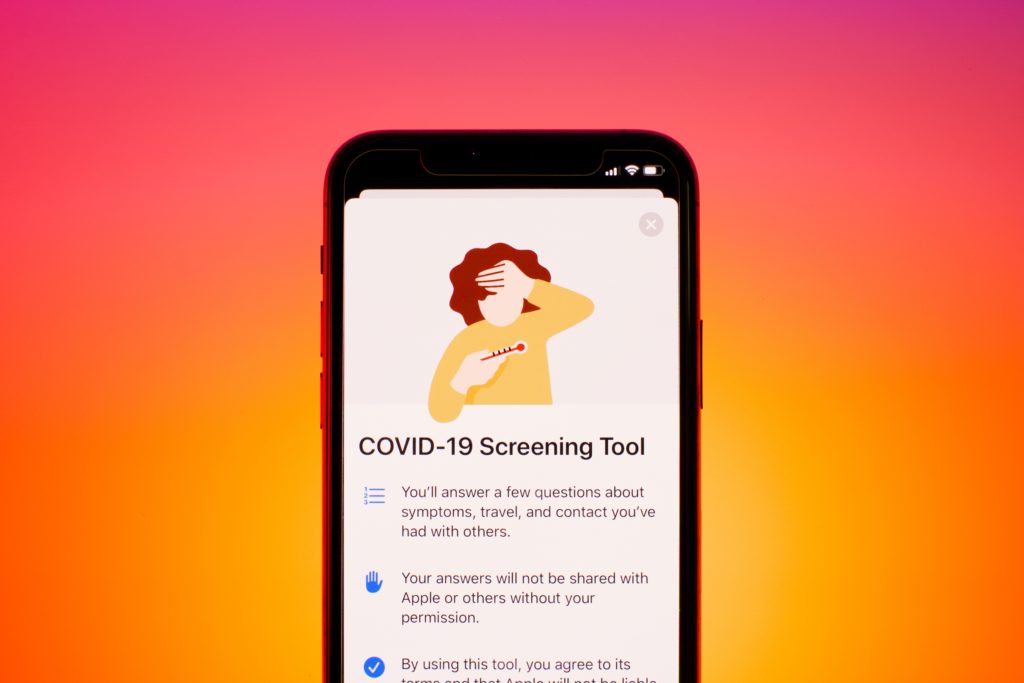

Yet another focus needs to be on improving user interface (UI) design. For now, the UI of file-sharing programs prioritizes convenience rather than safety. This is a bias that permeates the technology design community, and its most marked manifestation is in mobile apps where it is hard for users to assess the veracity of cloud-sharing emails and embedded hyperlinks.

To change this, UI should foster more personalization of the shared files. Users shouldn’t be permitted to share links without messages or subject lines and should be prompted to include a signature in the message. The design must also deemphasize the actions that users have to take and emphasize review, especially on mobile devices. This can be achieved by highlighting the user’s personalized message, by displaying the complete URL rather than shortening it, and by making the use of passwords for opening shared documents necessary.
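The sharing rules above could be enforced before a link is ever sent. The following Python sketch is one possible shape for such a check, not any provider's actual API; the `ShareRequest` fields and the `review_share_request` function are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ShareRequest:
    """Hypothetical model of what a sender fills in before sharing a file."""
    url: str            # complete link, shown to the recipient unshortened
    subject: str = ""
    message: str = ""
    signature: str = ""
    password: str = ""


def review_share_request(req: ShareRequest) -> Tuple[List[str], List[str]]:
    """Return (blocking problems, optional prompts) for a share request."""
    blocking, prompts = [], []
    if not req.subject.strip():
        blocking.append("Add a subject line.")
    if not req.message.strip():
        blocking.append("Add a personal message.")
    if not req.password:
        blocking.append("Set a password for the shared document.")
    if not req.signature.strip():
        # A signature is prompted for, not required.
        prompts.append("Consider adding a signature.")
    return blocking, prompts
```

A request with only a URL comes back with three blocking problems and one prompt, so the interface can refuse to send it until the sender has written something a recipient can actually review.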

UI design could also focus on integrating file-sharing portals with email services, so that links aren’t generated from the portal directly but are created from within email accounts that people are familiar with. This way, emails aren’t sent from unknown virtual inboxes, and personalization becomes easier.

Photo by John Schnobrich on Unsplash

The Cloud Is A Victim Of Its Own Success

Finally, our extant user training on email security is at odds with end-user cloud sharing behavior. Using the cloud today entails violating training-based knowledge, which, over time, changes user perceptions of the validity of training. We must update training to emphasize safety in the sharing and receiving of cloud files. This means fostering newer norms and best practices, such as using passwords and personalized messages while sharing. It also includes teaching users how to gauge whether a shared hyperlink is a spoof, and how to open such links in virtualized environments to contain any potential damage.

The cloud is becoming a victim of its own success. With many more players entering the market, the user experience is becoming more fragmented, and vulnerabilities are growing because each provider implements its platform differently. Today, there are hundreds of providers offering different cloud services, with many more coming online.

The industry is slated to grow even more, because we have barely tapped the overall potential market: anywhere from 30 to 90 percent of all organizations in the US, Europe, and Asia have yet to adopt the cloud. Thus, the user issues are only likely to increase as more providers and users enter the space.

Correcting this is now more important than ever, because a single major breach can erode user trust in the entire cloud experience, forever changing the cloud usage landscape.

*A version of this post appeared here: https://blog.ipswitch.com/data-security-in-the-cloud-part-2